A tale of two black boxes: Deep Learning Models and Human Cognition

Matan Fintz# and Uri Hertz - Department of Cognitive Sciences

Margarita Osadchy - Department of Computer Sciences

Machine Learning

Deep learning

Neural Networks

Psychology

Seed Grant 2020

A black box is a term used to describe a process whose internal operations and mechanisms are not accessible to us.

Human cognition, the way we process information, perceive and learn about the world, and make decisions, has long been such a black box.

Deep neural network (DNN) models, which are extremely useful tools across multiple domains, are also black boxes, as we have little insight into, or control over, the way they operate.

While understanding the internal operations of such black boxes is extremely important, it is not clear how one should go about studying them.

Cognitive scientists and neuroscientists have been using experiments and explicit computational models based on theory to study cognitive processes.

For example, theories of value-based learning and utility maximization are used to explain and predict human decision making and choices.
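To make the idea of an explicit, theory-based model concrete, the following is a minimal sketch of one common textbook form of value-based learning: a delta-rule (Rescorla-Wagner style) value update combined with a softmax choice rule, simulated on a two-option task. It is an illustration only, not the model used in this project; the function names, the reward probabilities, and the parameters `alpha` (learning rate) and `beta` (choice sensitivity) are all assumptions chosen for the example.

```python
import math
import random


def softmax_choice_probs(values, beta):
    """Map option values to choice probabilities.

    Higher beta makes the simulated agent choose the higher-valued
    option more consistently (closer to strict utility maximization).
    """
    highest = max(values)  # subtract the max for numerical stability
    exps = [math.exp(beta * (v - highest)) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def simulate_agent(reward_probs, n_trials=500, alpha=0.1, beta=5.0, seed=0):
    """Simulate a hypothetical value-learning agent on a bandit task.

    reward_probs: assumed probability of reward for each option.
    Returns the learned values and the sequence of choices.
    """
    rng = random.Random(seed)
    values = [0.0] * len(reward_probs)
    choices = []
    for _ in range(n_trials):
        probs = softmax_choice_probs(values, beta)
        choice = rng.choices(range(len(values)), weights=probs)[0]
        reward = 1.0 if rng.random() < reward_probs[choice] else 0.0
        # Delta rule: move the chosen option's value toward the outcome.
        values[choice] += alpha * (reward - values[choice])
        choices.append(choice)
    return values, choices


if __name__ == "__main__":
    learned_values, choices = simulate_agent([0.2, 0.8])
    print("learned values:", [round(v, 2) for v in learned_values])
    print("fraction choosing option 1:", sum(choices) / len(choices))
```

In a model of this kind, every quantity has a psychological interpretation (expected value, learning rate, choice consistency), which is what makes it usable for explaining and predicting choices.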

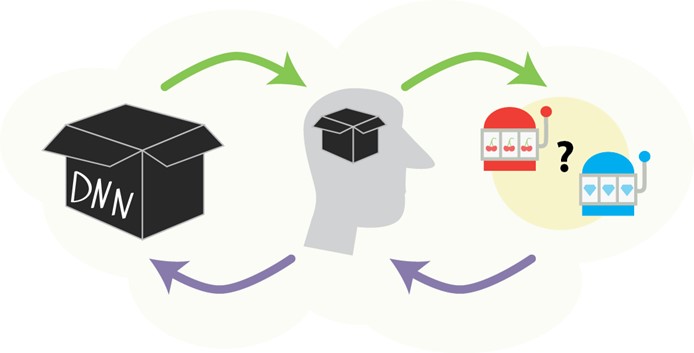

We show that a DNN model can greatly aid in studying the black box of human cognition by uncovering hitherto overlooked aspects of human decision making. We then show that, to interpret the internal workings of the DNN model (the second black box), we can set up experiments and use cognitive theory-based models.

This novel relationship between theory-driven and data-driven approaches works in both directions: DNN models are used to explore beyond the scope of established cognitive models in studying human cognition, and theory-driven models are used to explain and characterize the internal operations of DNN models. It is important both for making DNN models a powerful scientific tool and for understanding and interpreting them, using one black box to study the other.